Cheating prevention and detection


Screen takes cheating seriously, which is why we offer several ways to prevent and detect cheating on your tests. They are grouped into two sections below: Prevention measures and Detection measures.

✅ You can enable and disable cheating prevention and detection measures during the test creation process.

ℹ️ Interested in webcam proctoring? You can find more information on that here.

Prevention measures

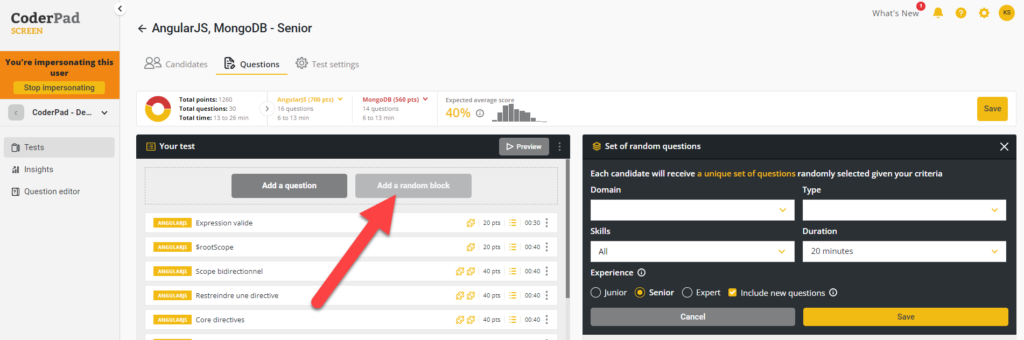

Random blocks

The Random blocks feature automatically generates randomized groups of questions based on your test settings (role, domain, difficulty, etc.). This means candidates don’t all receive the same test, which makes it much harder for them to look up or leak solutions online.
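The exact way Random blocks selects questions isn’t documented here. As a minimal sketch, assuming each question is tagged with a domain and a difficulty, a block could be drawn by filtering a question pool on the test settings and then sampling without replacement. The `Question` fields and the `build_random_block` function are hypothetical names used only for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    # Hypothetical question record; real test settings may use other fields.
    id: str
    domain: str
    difficulty: str


def build_random_block(pool, domain, difficulty, size, rng=None):
    """Pick `size` distinct questions matching the given settings, at random."""
    rng = rng if rng is not None else random.Random()
    matching = [q for q in pool if q.domain == domain and q.difficulty == difficulty]
    if len(matching) < size:
        raise ValueError("not enough matching questions in the pool")
    # sample() draws without replacement, so no question appears twice in a block.
    return rng.sample(matching, size)


pool = [
    Question(f"q{i}", "python", "easy" if i % 2 == 0 else "hard")
    for i in range(10)
]
print([q.id for q in build_random_block(pool, "python", "easy", 3)])
```

Because each call samples independently, two candidates taking the same test are likely to see different subsets of the matching questions.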

Cheat warnings

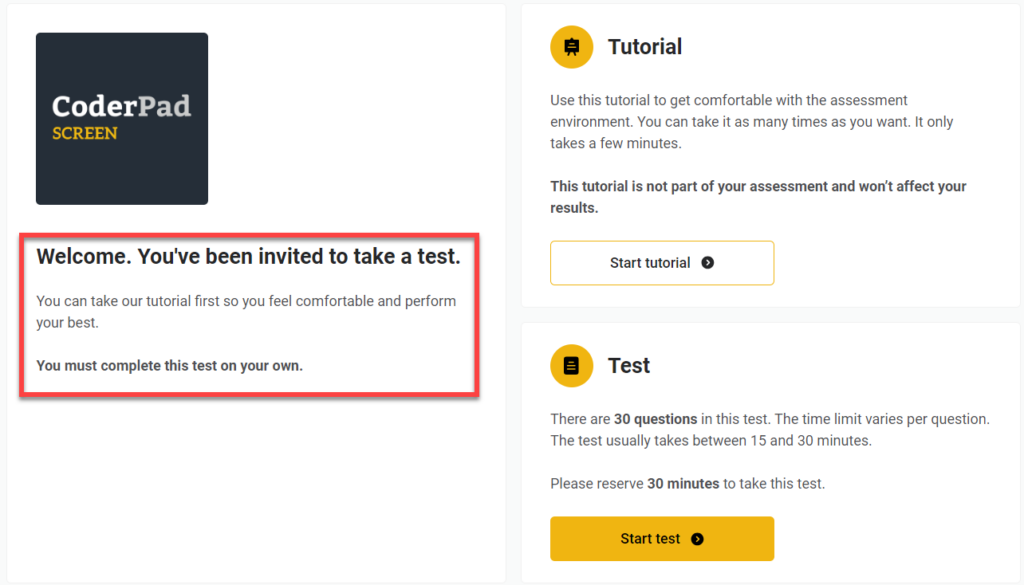

All candidates starting a test are presented with a welcome screen that includes a basic anti-cheating warning. You can customize the wording of the welcome screen to make this message more prominent if needed; instructions for editing that message can be found here.

Once the candidate starts the test, they’ll also receive a confirmation pop-up mentioning our cheat detection mechanisms and clarifying expectations.

Additionally, the tutorial that candidates complete shows them what the detailed report will look like (including code playback), which reinforces transparency about what is expected of them.

Time limits

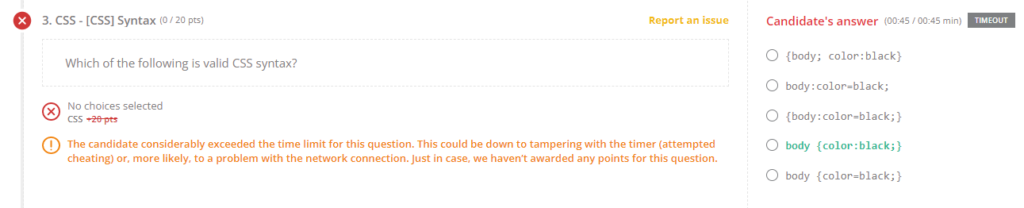

You can set time limits on multiple-choice questions so that candidates don’t have time to look up the answers online.

This setting is enabled by default; you can find out more about editing the settings here.

Copy/pasting prevention

We automatically block copying of the question statement, both to prevent leaks and to make it harder to find the solution online.

You can also prevent candidates from pasting text that was copied from outside the IDE.

⚠️ Before activating this paste-blocking feature, keep in mind that candidates have many legitimate reasons to copy and paste, such as using a more familiar IDE on their desktop or working out the problem in a text editor like Notepad++.

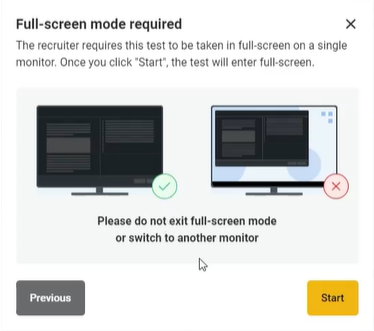

Full-screen mode

If enabled, candidates must enter full-screen mode before starting the test. If they exit full-screen mode or switch to another monitor, an alert will trigger after a 10-second grace period.
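As an illustration only, the grace-period rule could be modeled as follows, assuming the alert fires only when the candidate stays outside full-screen mode for longer than 10 seconds. The event format and the `full_screen_alerts` function are hypothetical and not part of Screen.

```python
def full_screen_alerts(events, test_end, grace_seconds=10.0):
    """Return the times at which a full-screen alert would fire.

    events: (time_in_seconds, kind) pairs sorted by time, where kind is
    "exit" (left full screen or switched monitor) or "enter" (returned).
    """
    alerts = []
    exited_at = None  # when the current absence started, if any
    for t, kind in events:
        if kind == "exit" and exited_at is None:
            exited_at = t
        elif kind == "enter" and exited_at is not None:
            # Only absences longer than the grace period raise an alert.
            if t - exited_at > grace_seconds:
                alerts.append(exited_at + grace_seconds)
            exited_at = None
    # A candidate still outside full screen when the test ends also counts.
    if exited_at is not None and test_end - exited_at > grace_seconds:
        alerts.append(exited_at + grace_seconds)
    return alerts


events = [(5, "exit"), (8, "enter"), (20, "exit"), (35, "enter")]
print(full_screen_alerts(events, test_end=60))  # [30.0]
```

Here the 3-second absence is forgiven, while the 15-second absence produces one alert at the moment the grace period runs out.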

Detection measures

⚠️ Suspicious behavior does not always indicate cheating. We flag these behaviors so that you can investigate further before determining whether a candidate actually cheated.

In other words, while you may receive notifications about suspicious behavior during a test, candidates often have legitimate reasons for actions such as copying and pasting or taking an unusually long time to answer a question.

Automatic detection

Screen is currently set up to detect the following potential cheating patterns:

- Plagiarism: Screen recognizes when a candidate submits exactly the same code that another candidate previously submitted, and triggers a notification.

- Abnormal candidate performance: for example, a difficult question completed in a fraction of the usual time.

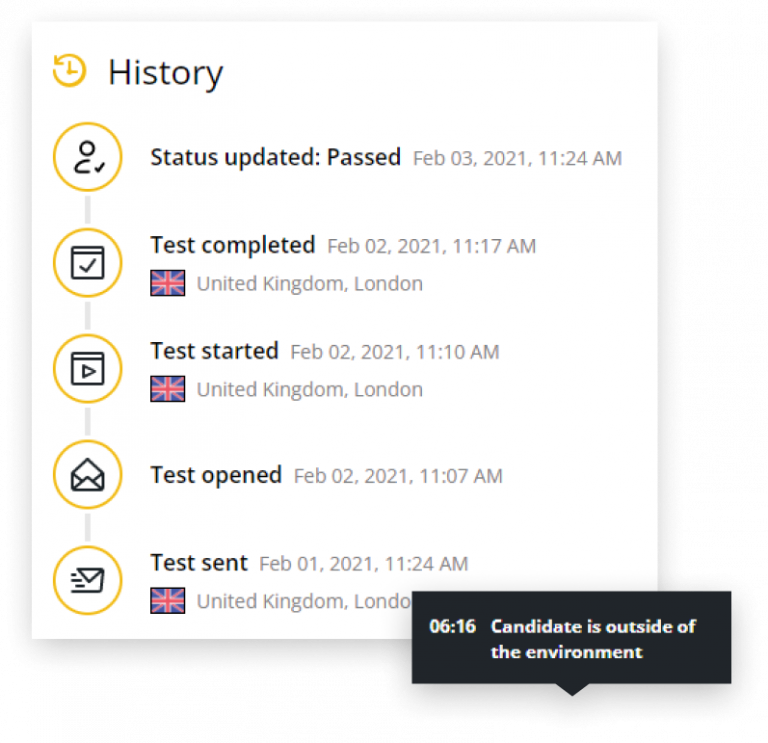

- Leaving the IDE: We can tell if candidates have left the test environment.

- Copy/pasting: We can also tell if candidates have copied and pasted code into the Screen IDE.

- Geolocation changes: We track candidates’ approximate geolocation to spot unusual behavior, such as logins from different locations or devices during the test. This helps you determine whether another person is taking the test on the candidate’s behalf.

⚠️ There may be legitimate reasons why a candidate’s timeline lists different locations, such as using a VPN or temporarily being out of the country. As with the other warnings, you should investigate further before rejecting the candidate.
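Screen’s actual detection logic isn’t described in this article. As a rough illustration only, the plagiarism and abnormal-performance checks listed above could be sketched as follows. `ExactMatchDetector`, `is_abnormally_fast`, the line-ending normalization, the 20%-of-median threshold, and the five-sample minimum are all hypothetical choices, not Screen’s settings.

```python
import hashlib
import statistics


class ExactMatchDetector:
    """Flags submissions identical to an earlier one for the same question."""

    def __init__(self):
        self._first_submitter = {}  # (question_id, fingerprint) -> candidate_id

    @staticmethod
    def fingerprint(code):
        # Assumption: only line endings are normalized; everything else must match exactly.
        normalized = code.replace("\r\n", "\n")
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def submit(self, question_id, candidate_id, code):
        """Return the earlier candidate's id on an exact match, else None."""
        key = (question_id, self.fingerprint(code))
        first = self._first_submitter.setdefault(key, candidate_id)
        return first if first != candidate_id else None


def is_abnormally_fast(duration, past_durations, fraction=0.2, min_samples=5):
    """True if `duration` is below `fraction` of the median past duration."""
    if len(past_durations) < min_samples:
        return False  # not enough history to judge what "usual" means
    return duration < fraction * statistics.median(past_durations)


detector = ExactMatchDetector()
print(detector.submit("q1", "alice", "print(1)\n"))  # None
print(detector.submit("q1", "bob", "print(1)\r\n"))  # alice
print(is_abnormally_fast(100, [600, 700, 800, 900, 1000]))  # True
```

Hashing each submission keeps the exact-match lookup fast however many earlier submissions are stored, and using the median rather than the mean keeps a few very slow attempts from skewing what counts as the usual time.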

Additionally, we take the following actions to reduce the risks and impact of leaked questions and answers:

- We continually monitor the internet (Reddit, StackOverflow, Git, etc.) for leaked questions and take action to remove any leaked content.

- We refresh any leaked or compromised questions, and we also regularly update and refresh ALL of our questions.

Cheating notifications

There are four places where you can review potential cheating by candidates.

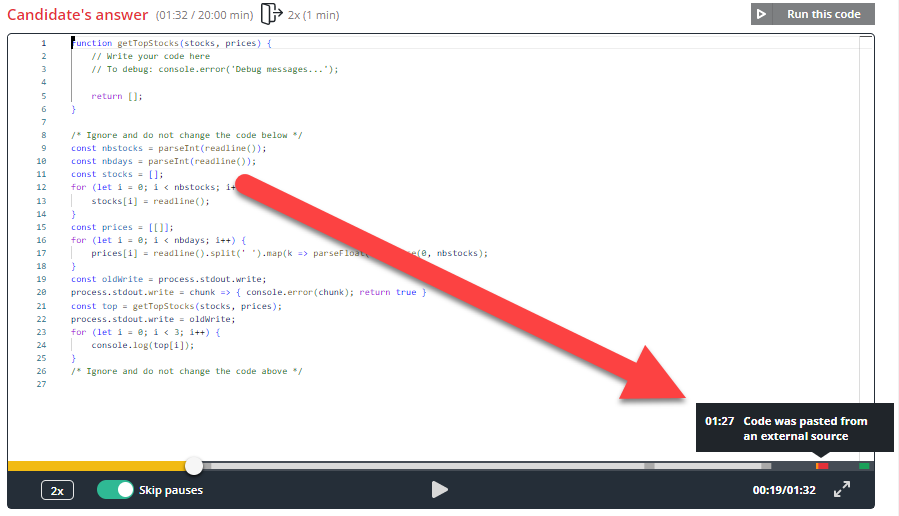

The first is the Code playback feature, a recording of the candidate’s screen as they work on the programming questions. Not only can you watch them build their answer, but you can also see when they left the coding environment and when they copied and pasted code:

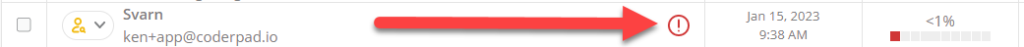

The second is in the candidate list, where you’ll see a red exclamation point inside a red circle if Screen has detected potential cheating.

The third is your simplified candidate report, where you’ll receive plagiarism alerts, notifications of candidates leaving their IDEs, and any geolocation issues.

Lastly, if you click on the View detailed report button on the summarized report, you can see detected issues for individual questions:

✅ You can manually override the automatic scoring if you feel it is necessary.

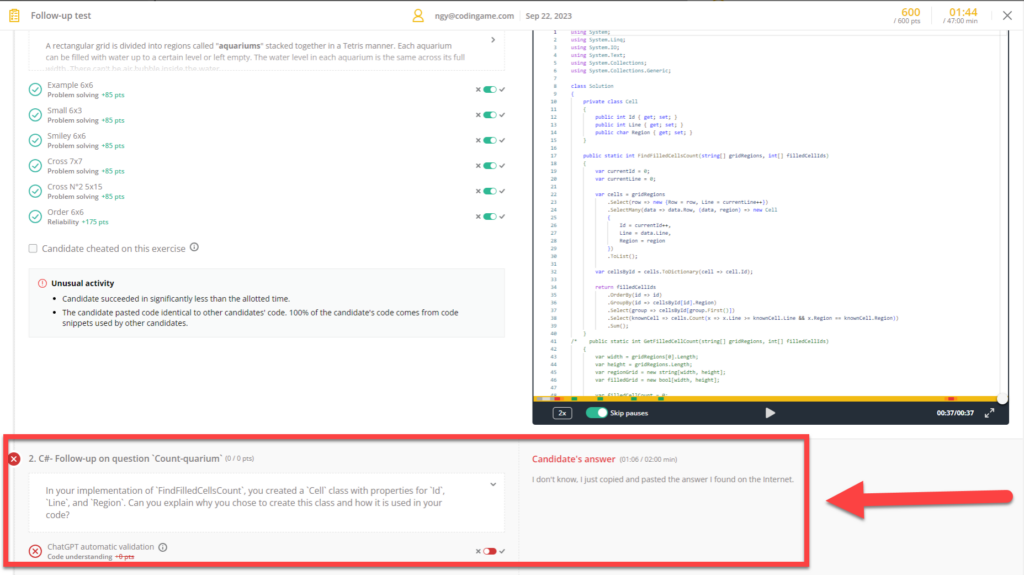

AI follow-up question

Another cheating prevention measure you can enable is an AI-generated follow-up question. Our ChatGPT integration generates a question asking the candidate to explain a piece of their code, then validates the candidate’s answer.

✅ You can also enable this feature in the Test settings.

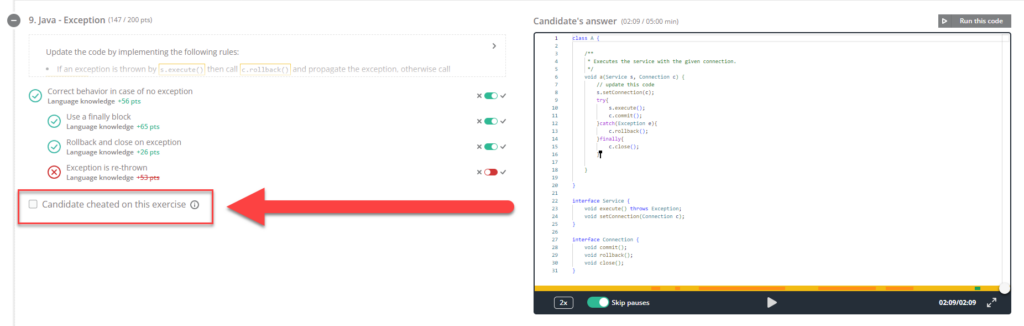

Cheat reporting

If you’ve determined that a candidate cheated on a particular question, you can record this by selecting the Candidate cheated on this exercise checkbox on that question.

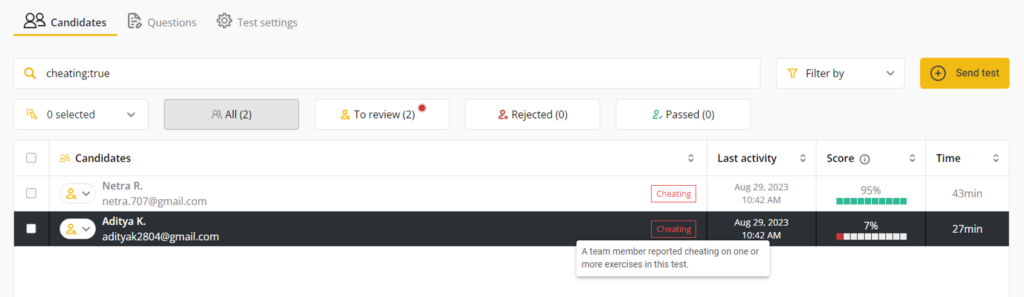

Checking this box will remove points for the exercise and add a filter to the candidate. Additionally, in the Candidates screen of the test, you’ll see a Cheated notification next to the candidate’s name.

ℹ️ For more information on our anti-cheat features, including answers to a few frequently asked questions, check out our Anti-cheat product page.