How to Optimize MySQL Database Schema for Improved Performance

According to the TIOBE index, MySQL is listed among the top databases used in developing APIs for web and mobile applications. The performance and user experience of your app depend on the performance of your database. That is why database engineers and web developers are often required to know how to tune a database for optimal performance.

When tuning a slow database, we usually begin by optimizing the queries we write. But another way you can speed up your database is by optimizing your schema.

This guide presumes that you are an experienced engineer with basic knowledge of relational databases like MySQL, PostgreSQL, etc. By the end of this article, you will:

- Understand what a database schema is

- Understand the benefits of schema optimization

- Learn the different techniques that can be used to optimize a database schema

What is a Database Schema?

A database schema, or just schema for short, is a blueprint that represents the organization of data in your database. It represents the general structure of the logical features of a database including elements such as tables, fields, records, columns, triggers, functions, procedures, indexes, and keys as well as the relationships they have with one other.

Database schemas help with database optimization to drive faster query response times by aiding efficient retrieval of data. Database schema design refers to the various practices or techniques involved in constructing a database schema.

Good database schema design can be a deciding factor to measure query performance.

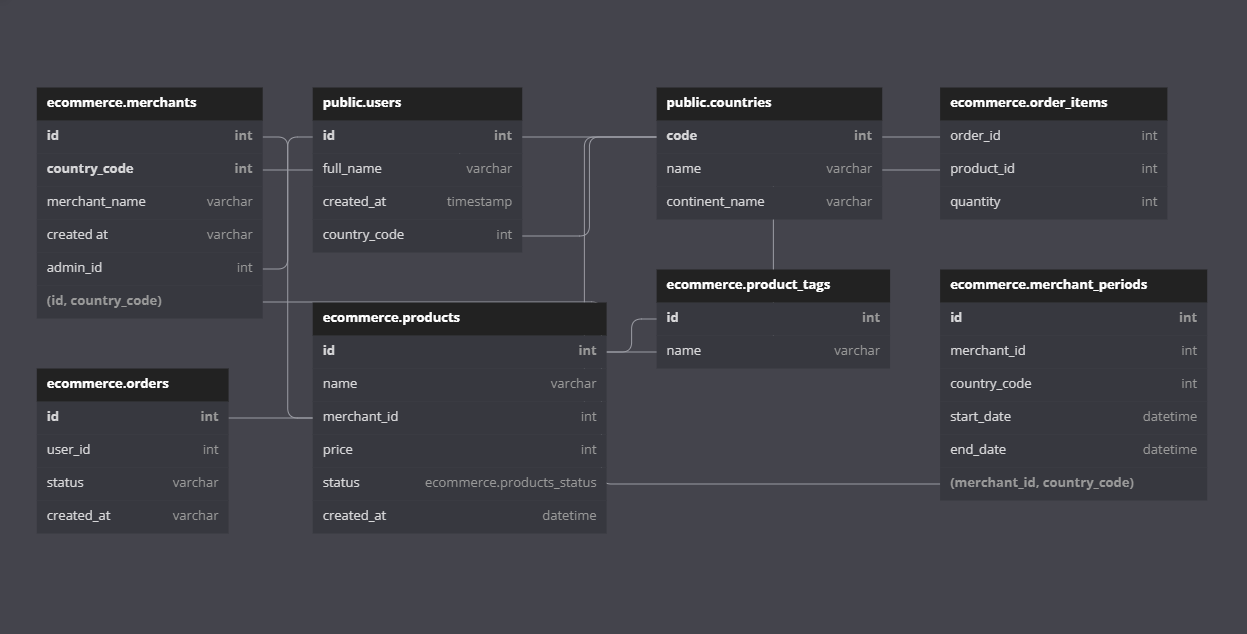

Entity Relationship Diagrams

While database schemas are used by database administrators to model and build the structure of a database, Entity Relationship Diagrams (ERDs) are used to visually represent the relationship between entities in your database. ERDs are much easier to understand by end-users and business owners.

ERDs are used to show the relationship between tables, which are usually represented in squares, and lines, which show primary and secondary key constraints between the tables. You will often find symbols and line connectors explaining the type of relationship between the entities (one-to-one, one-to-many, many-to-many).

Benefits of Good Schema Design

A poorly designed schema can significantly hurt database performance and query execution speed. Inefficiently organized data consume lots of system resources. They are also very confusing and hard to maintain by database administrators. This is why designing a good schema is important.

Some of the benefits of well-designed schemas include:

- Making your data easy to understand, analyze and interpret.

- Reducing and eliminating data redundancy.

- Preventing data inconsistency and inaccuracy

- Improving data access and query response time.

- Ensuring data security

- Ensuring data integrity and correctness.

How to Create a Database Schema in MySQL

There are several ways to create a schema in MySQL. You can easily use the MySQL Workbench Tool to create the schema; under the hood, MySQL Workbench creates an SQL file and runs it on your behalf. You can also create a SQL file in a text editor and define your schema in it. The most common approach, however, would be to make use of an existing ORM to define your schemas.

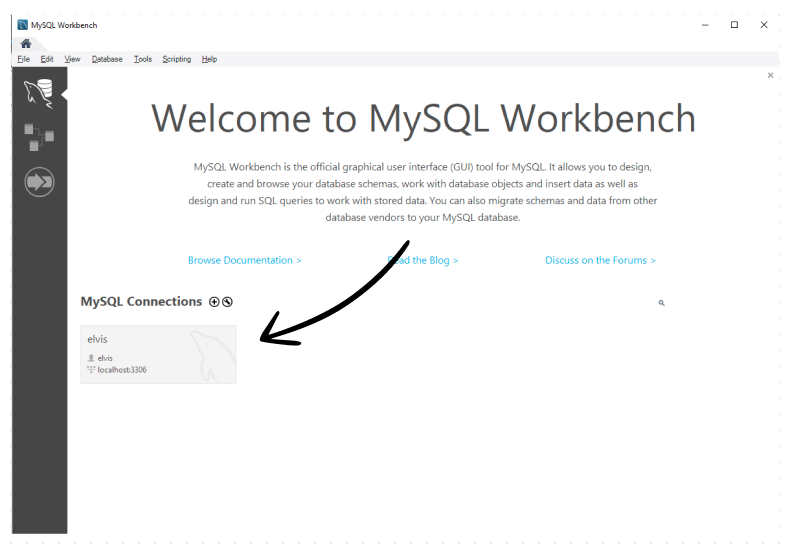

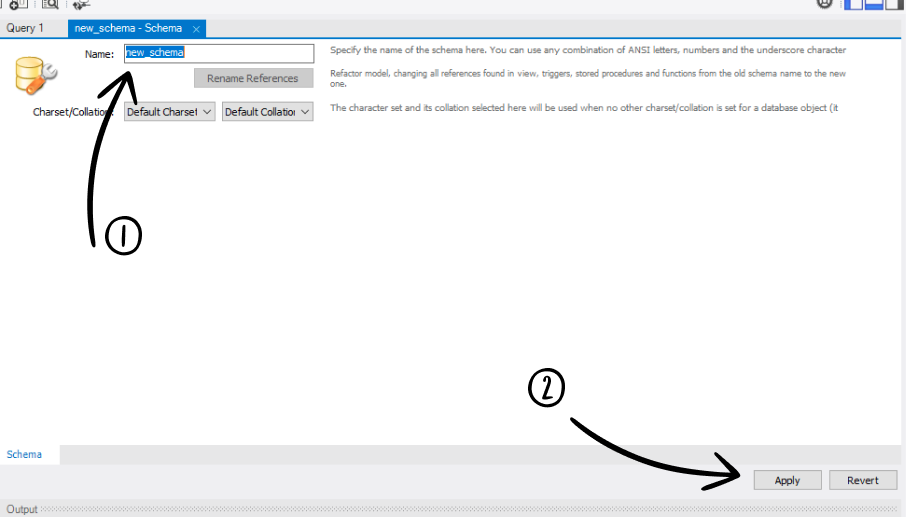

Using MySQL Workbench

To create a schema using MySQL Workbench, you can follow the following steps:

1. Open MySQL Workbench and connect to your MySQL instance.

Connecting to a new MySQL instance in MySQL Workbench

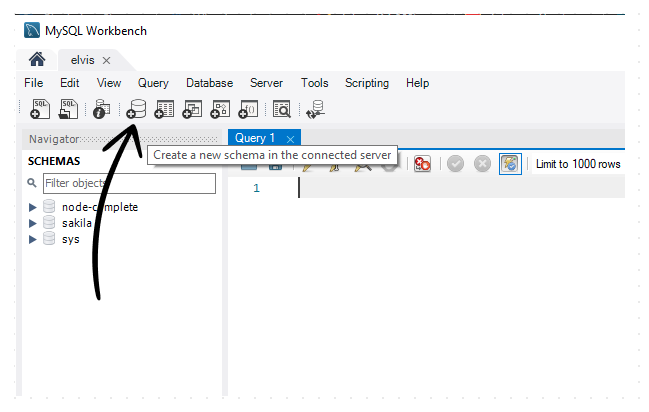

2. In the SQL Editor view, locate and click on the create schema button:

3. Enter the name for your schema and click the “Apply” button.

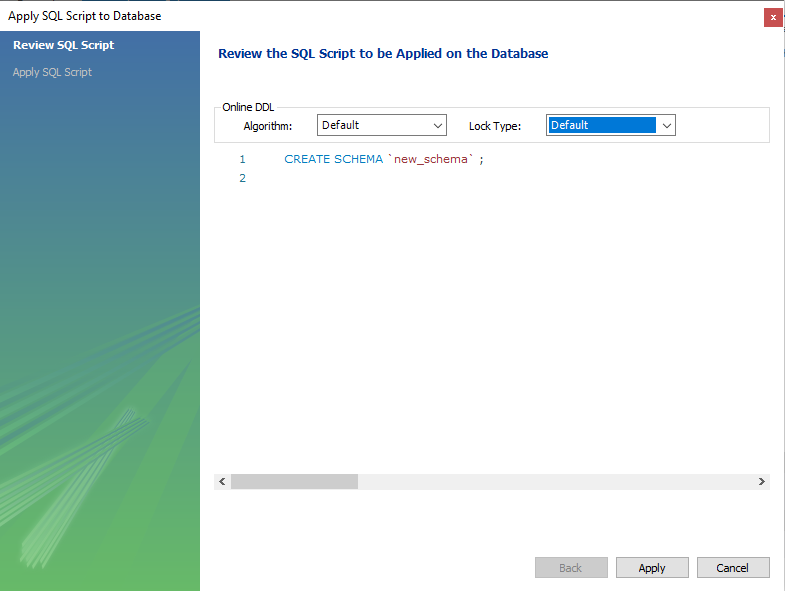

4. The next screen allows you to customize the schema by applying an SQL script. You can use this screen to define the tables, columns, constraints, indexes, etc.

5. Finally, you can click on “Apply” to execute the script.

Using a Terminal to execute a SQL script file

If you are a terminal freak like me 😂, you can avoid the steps above by creating a new SQL file to define your schemas. When done you can run a command to execute the SQL script file in this manner:

mysql -u yourusername -p yourpassword yourdatabase < sql_fileIf you’re already logged into the MySQL shell, you can run the following instead:

mysql> source /path/to/file/filename.sql;You can also run direct commands to create a database with tables and columns, etc as demonstrated in the following steps:

After logging in to the MySQL shell, create an ice_cream_shop database using this command:

mysql> CREATE DATABASE ice_cream_shop;Switch to the ice_cream_shop:

mysql> USE ice_cream_shop;Code language: PHP (php)How to Optimize a Schema for Performance

When designing a database schema, we have to ensure that it is optimized for performance. In this section, we discuss in-depth the different techniques you can use to do so.

Use Appropriate Naming Conventions

A lot of database admins and programmers often struggle with understanding the information stored in existing databases. This is mostly because of the naming conventions that were used to create the tables and columns.

A table name must describe the entity it represents and the column names must properly describe the properties of the entity. When you use confusing words for the table or column names, they become unreadable and you make it hard for that entity to be understood by others.

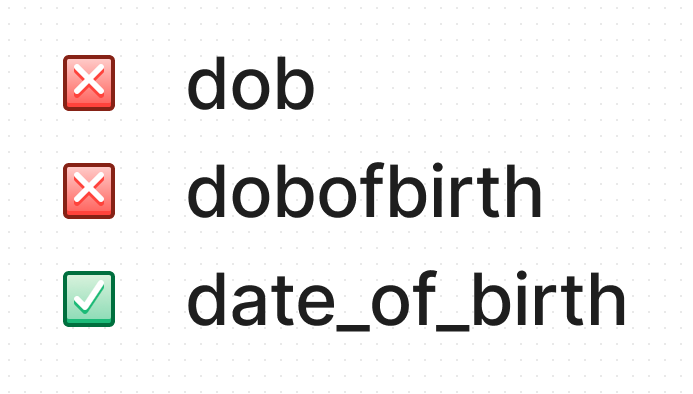

You can adopt the following rules to ensure proper naming convention in your database schemas:

- Avoid acronyms or short words for tables and column names. Remember not everyone may understand the acronym you use. Instead, try to write the names fully and clearly.

- Use underscores to separate words e.g.

date_of_birthinstead ofdateofbirth. - Avoid using reserved words for your tables and column names because they will lead to a syntax error.

- Avoid using special characters for your tables and column names.

- Table names should be in the singular form e.g.

inventoryinstead ofinventories. - Use simple words that inform the underlying data represented. For example, a table that represents cities in a country can be simply named

cityinstead ofcity_of_country.

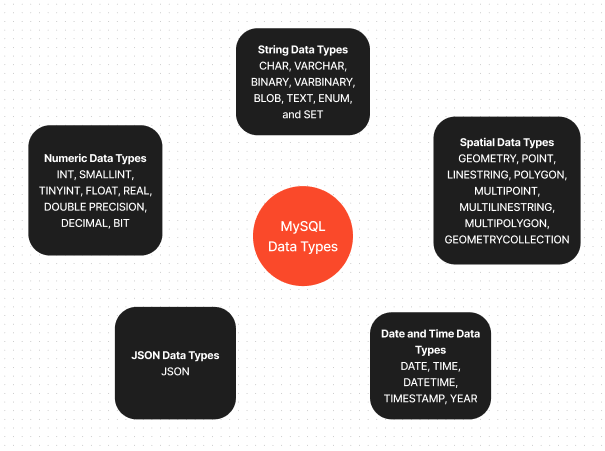

Use Correct Data Types

Using the wrong data types to represent data in your schema can increase disk space usage, and affect query performance and data retrieval speed. You should always use the proper types to describe the data you wish to store.

Thankfully, we are not short of options when it comes to picking the right data type as MySQL provides a large variety. You can follow the guidelines below when deciding on what data type to choose.

Aim for the smallest data type

A common mistake in schema design is using large data types to represent data. If you care about saving some disk space, you should consider going for the smallest data type that represents the data you’re trying to store.

An example is when you wish to save strings. Let’s say you have a column that saves usernames shorter than 30 characters. You can use the VARCHAR(30) to represent data in that column.

For text that may be longer, such as a user’s bio, you can limit the text to, let’s say, 255 characters and use VARCHAR(255).

For yes or no values, a CHAR(1) is significantly better and more performant than a VARCHAR(1). This is because the CHAR(1) has a fixed length of exactly 1 byte, whereas VARCHAR(1), because of its variable length nature, will have to store extra information about the length of characters which takes about 2 more bytes. You can also use the TINYINT type to represent booleans in MySQL.

Use the right data types

In SQL, it’s very possible to represent data in as many data types. But choosing the right one is the sweet spot to optimizing your schema.

An example (also a common question asked) is how to represent dates in SQL. MySQL provides the DATE, DATETIME, and TIMESTAMP types to represent dates. They are quite similar and often can be used to achieve the same thing, but still have quite a few differentiating factors. According to theofficial documentation,

- The

DATEtype is used for values with a date part but no time part. - The

DATETIMEtype is used for values that have both date and time parts in the ‘YYYY-MM-DD hh:mm:ss’ format. - The

TIMESTAMPis also used for values that have both date and time parts but it expects values in the range ‘1970-01-01 00:00:01’ UTC to ‘2038-01-19 03:14:07’ UTC.

So whenever you want to represent a field that contains only dates, you’ll be better off with the DATE type. But if you want to keep track of the time you can use the DATETIME or the TIMESTAMP data types. Another reason you may want to choose the DATETIME or TIMESTAMP types is if you need automatic initialization and updates to the current date and time for your data.

Avoid Nulls as much as possible

Another design flaw with database schemas is setting NULL as a default even when you don’t mean it. Columns that have NULL data types make it difficult for MYSQL to optimize queries that access them.

If you need to improve disk usage, data access, and query speed a little, you should instead use NOT NULL as default if you are sure that there won’t be any NULL values in a column.

Enforce Referential Integrity (Constraints)

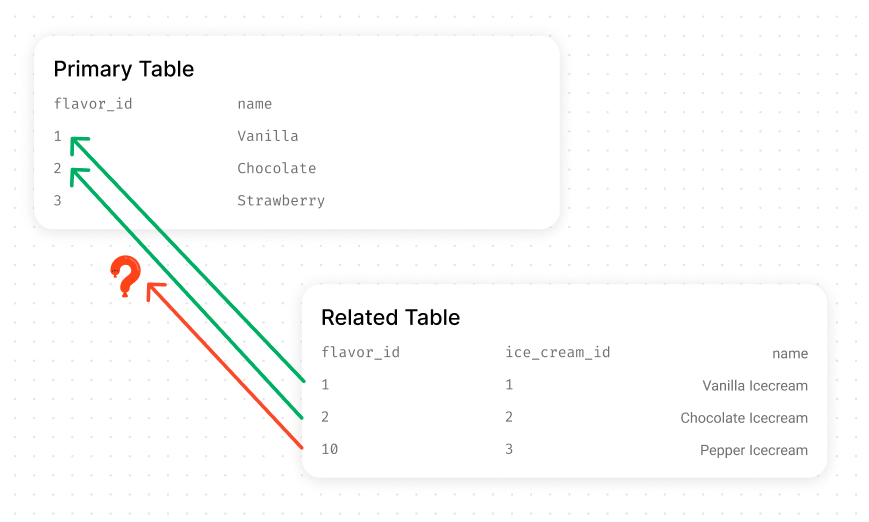

Referential integrity is a data quality concept that prevents data inconsistency and loss. It can be used to ensure that changes in a table reflect in other tables. It is also used to enforce constraints across tables in your database.

For example, a primary key constraint enforces that a primary key of a table cannot be null when referenced. You can also create constraints between tables by matching the primary key of a table with the foreign key of another table.

Failure to add these constraints will make data integrity dependent on business logic only, making it prone to human error.

The following example shows how we can enforce referential integrity with foreign keys:

Log into your MySQL shell from your terminal:

sudo mysql -u root -pCreate a blog database using the following command:

mysql> CREATE DATABASE blog;Switch to the ice_cream_shop:

mysql> USE blog;Code language: PHP (php)Our database is quite small and only has two tables, an author table, and a post table. Both tables share a one-to-many relationship. This means that:

- An

authorcan create manyarticles. - An

articlecan only belong to oneauthor.

We will create the two tables, define the columns for each table, and link them together. But before we dive into that, we have to make sure the design meets the following constraints:

- We create the tables using the

InnoDBdatabase engine. - We must index the columns that will hold the foreign and primary keys.

- We also need to ensure that the data types of the related columns are the same.

Creating the author and post tables

The following example shows how we would create the two tables, add constraints and establish the 1-n (One-to-many) relationship between them.

CREATE TABLE author (

author_id INT NOT NULL AUTO_INCREMENT PRIMARY KEY,

first_name VARCHAR(50) NOT NULL,

last_name VARCHAR(50) NOT NULL

);

CREATE TABLE article (

article_id INT NOT NULL AUTO_INCREMENT PRIMARY KEY,

author_id INT NOT NULL,

title VARCHAR(50) NOT NULL,

INDEX (author_id),

FOREIGN KEY (author_id) REFERENCES author(author_id)

);Code language: PHP (php)The following example shows another way you can create a 1-n (one-to-many) relationship between tables in MySQL using a composite key:

CREATE TABLE author (

first_name VARCHAR(50) NOT NULL,

last_name VARCHAR(50) NOT NULL,

PRIMARY KEY (first_name, last_name)

);

CREATE TABLE article (

id INT NOT NULL AUTO_INCREMENT PRIMARY KEY,

title VARCHAR(50) NOT NULL,

author_first_name VARCHAR(50) NOT NULL,

author_last_name VARCHAR(50) NOT NULL,

FOREIGN KEY (author_first_name, author_last_name) REFERENCES author("first_name", "last_name")

);Code language: PHP (php)The relationship is one-to-many because the foreign keys we defined in both examples lack the UNIQUE constraint on them. This is the main difference between a 1-1 and 1-n relationship in SQL.

For our example, we will use the first method since we do not need to create a composite key, also it’s far easier to type. Run the command in the first example to create the tables.

Next, we add some authors to our blog database:

mysql> INSERT INTO author (first_name, last_name) VALUES ('Elvis', 'Duru');

mysql> INSERT INTO author (first_name, last_name) VALUES ('Howard', 'Lovecraft');Code language: JavaScript (javascript)We can confirm the inserts by inspecting the table’s records:

mysql> SELECT * FROM author;

+-----------+------------+-----------+

| author_id | first_name | last_name |

+-----------+------------+-----------+

| 1 | Elvis | Duru |

| 2 | Howard | Lovecraft |

+-----------+------------+-----------+

2 rows in set (0.00 sec)Code language: JavaScript (javascript)Let’s add some data to our article table. Run the following:

mysql> INSERT INTO article (title, author_id) VALUES ("The Call of Cthulhu", 2);

mysql> INSERT INTO article (title, author_id) VALUES ("The Shadow Over Innsmouth", 2);Code language: JavaScript (javascript)Just as we did for the authors, we can confirm that we inserted our data correctly:

mysql> SELECT * FROM article;

+------------+-----------+---------------------------+

| article_id | author_id | title |

+------------+-----------+---------------------------+

| 1 | 2 | The Call of Cthulhu |

| 2 | 2 | The Shadow Over Innsmouth |

+------------+-----------+---------------------------+

2 rows in set (0.00 sec)Code language: JavaScript (javascript)Normally, if we were to query data to be sent to an application, we would probably use a JOIN query to combine the data in related tables as follows:

mysql> SELECT * FROM author INNER JOIN article ON author.author_id = article.author_id;

+-----------+------------+-----------+------------+-----------+---------------------------+

| author_id | first_name | last_name | article_id | author_id | title |

+-----------+------------+-----------+------------+-----------+---------------------------+

| 2 | Howard | Lovecraft | 1 | 2 | The Call of Cthulhu |

| 2 | Howard | Lovecraft | 2 | 2 | The Shadow Over Innsmouth |

+-----------+------------+-----------+------------+-----------+---------------------------+

2 rows in set (0.00 sec)Code language: JavaScript (javascript)Note that using an INNER JOIN will only select rows that have data on both sides of the relationship. To select all the rows, you can use a LEFT JOIN or RIGHT JOIN.

+-----------+------------+-----------+------------+-----------+---------------------------+

| author_id | first_name | last_name | article_id | author_id | title |

+-----------+------------+-----------+------------+-----------+---------------------------+

| 1 | Elvis | Duru | NULL | NULL | NULL |

| 2 | Howard | Lovecraft | 2 | 2 | The Shadow Over Innsmouth |

| 2 | Howard | Lovecraft | 1 | 2 | The Call of Cthulhu |

+-----------+------------+-----------+------------+-----------+---------------------------+

3 rows in set (0.00 sec)Code language: PHP (php)Finally, the most important bit. Let’s test the constraint rules we defined by inserting an article with an invalid author_id like 7:

mysql> INSERT INTO article (title, author_id) VALUES ("The Book of MySQL Errors", 7);

ERROR 1452 (23000): Cannot add or update a child row: a foreign key constraint fails (`blog`.`article`, CONSTRAINT `article_ibfk_1` FOREIGN KEY (`author_id`) REFERENCES `author` (`author_id`))Code language: JavaScript (javascript)MySQL performed a referential integrity check which our query failed to obey and this resulted in an error thrown by the MySQL server.

Because of the rules we have set up in our schema, we have prevented human error from ruining the data integrity of our database, as well as prevented data loss. This is the main reason why you need to enforce referential integrity in your databases.

Obey the Rules of Normalization

Normalization is a database design technique that involves efficiently organizing data to eliminate data redundancy and correct data dependency. The concept of normalizing data was developed by E.F.Codd in 1970 [1]. Knowing and applying the principles of normalization can drastically improve your databases’ performance.

Normal Forms

Normal Forms are a series of guidelines that ensure data is normalized. The steps mentioned in the guidelines are usually done in sequence, one form before the next.

There are about seven (7) types of normal forms: First Normal Form (1NF), Second Normal Form (2NF), Third Normal Form (3NF), Boyce-Codd Normal Form (BCNF), Fourth Normal Form (4NF), Fifth Normal Form (5NF) and the Domain-Key Normal Form (DKNF).

In practical database design, we mainly use up to the Third or occasionally the Fourth Normal Form. You rarely need to worry about the others, so I have skipped them. But if you want to read about the others, check out this resource.

First Normal Form (1NF)

The First Normal Form lays the foundation for organizing a database. A relation achieves 1NF when it has only single viewed attributes (neither repeating). The rules to achieving that include:

- Eliminate duplicate columns from the same table

- Ensure that each column contains only one value (no arrays permitted)

- No tables should have repeating groups of related data. Create individual tables for each group of related data, and identify each row with a unique column or set of columns (PRIMARY key)

Second Normal Form (2NF)

The Second Normal Form continues where the First Normal Form rules stop. It further addresses the removal of duplicate data.

A relation achieves 2NF when every non-key attribute is functionally dependent on the primary key. To achieve this:

- You must first meet the requirements of the First Normal Form

- Place subsets of data that apply to multiple rows in their own separate tables so that dependency can be preserved.

- Use foreign keys to establish relationships between the new tables and their predecessors.

Third Normal Form (3NF)

A table satisfies the Third Normal Form when:

- It meets the requirements of the Second Normal Form and

- All the columns in the table are fully dependent on the primary key.

The example below shows a table representing data that needs to be normalized:

| Title | Author | Price | Genre | Customer | ISBN | Order |

| The Call of Cthulhu | Howard Lovecraft | 99.99 | Horror | Elvis Duru | 9781624650444 | BK-11212121 |

| Things Fall Apart | Chinua Achebe | 78.99 | Historical Fiction | Billie Jean | 9780385474542 | LD-12133311 |

| The Shadow Over Innsmouth | Howard Lovecraft | 89.99 | Horror | Shania Leslow | 9781450562799 | MJ-12901991 |

If we apply the First, Second, and Third Normal Form rules to our database design, we would have the following results:

Book table

| book_id (primary key) | title | isbn | price | author_id (foreign key) | genre_id (foreign_key) |

| 1 | The Call of Cthulhu | 9781624650444 | 99.99 | 1 | 1 |

| 2 | Things Fall Apart | 9780385474542 | 78.99 | 2 | 2 |

| 3 | The Shadow Over Innsmouth | 9781450562799 | 89.99 | 1 | 1 |

Genre table

| genre_id (primary key) | name |

| 1 | Horror |

| 2 | Historical Drama |

Author table

| customer_id (primary key) | first_name | last_name |

| 1 | Howard | Lovecraft |

| 2 | Chinua | Achebe |

Customer table

| customer_id (primary key) | first_name | last_name |

| 1 | Elvis | Duru |

| 2 | Billie | Jean |

| 3 | Shania | Leslow |

Order table

| order_id (primary key) | order_number | book_id | customer_id (foreign key) |

| 1 | BK-11212121 | 1 | 1 |

| 2 | LD-12133311 | 2 | 2 |

| 3 | MJ-12901991 | 3 | 3 |

Boyce-Codd Normal Form (BCNF or 3.5NF)

A table satisfies the Boyce-Codd Normal Form when:

- It meets the requirements of the Third Normal Form and

- Every determinant is a candidate key

Fourth Normal Form (4NF)

A table satisfies the Fourth Normal Form when:

- It meets the requirements of BCNF and

- It has no multivalued dependency.

Pros of a Normalized Schema

- A well-normalized database allows faster updates compared to denormalized databases

- Normalized tables are generally smaller than denormalized tables, which makes them occupy less storage and perform better.

- You don’t have to use

DISTINCTorGROUP BYin your queries when fetching data anymore because there’s a lack of redundancy. This speeds up query response times.

Cons of a Normalized Schema

- Most queries that retrieve data from normalized tables often involve joins and joins can be expensive compared to normal single table select queries

- Some columns may perform better when placed within an index in the same table, but due to normalization, these columns are usually placed in separate tables.

Should I Normalize My Database?

Based on the pros and cons of both normalized and denormalized databases, we can deduce that there’s no one fit for normalization. You can play with both approaches and stick with what works best or even mix the two. If you find your database queries performing slow or you are experiencing storage issues, it could be that you have redundant data present in your database. This is a good case to optimize your schema by normalizing it.

Some Final Remarks

Good database design is critical to the success of a DBMS. It makes life so easy for you, the database admin, your end-users, and even the MySQL server. Luckily for us, MySQL has evolved and provides many great tools and features that we can utilize when designing optimal databases.

A well-designed schema is a foundation for MySQL database performance. Schema optimization can make your queries faster by reducing the amount of traffic and time required to query your database. In a previous article, we explained various techniques on how to optimize query performance in MySQL. If you have not read that article, I recommend you do so. In our next article, we will talk about indexes and how they can affect the performance of your database.

I’m Elvis Duru. I create helpful content for web developers with a focus on React, Node.js, SQL/NoSQL Databases, and more! Let’s connect on Twitter.

References

[1] E. F. Codd. A Relational Model of Data for Large Shared Data Banks. Communications of the ACM, 13(6):377-387, Jun 1970.